The Graph Network In Depth - Part 2

This is the second part of a two-part post exploring the design of The Graph Network. You can read the first part here.

In the first post we gave an overview of what The Graph Network is, how it will look from an end user perspective and how it works at a high level.

In this post we’ll be going deeper on several key concepts, including how Graph Tokens are used for indexer staking, curation and indexer rewards. I’ll also introduce Graph Explorer, a dApp for interacting with The Graph Network, and describe our payments and verification layers.

Indexer Staking

The Graph adopts a staking model in which Indexers must stake Graph Tokens (GRT) in order to sell their services in the query market. This serves two primary functions:

- It provides economic security, as the staked GRT can be slashed if Indexers perform their work maliciously. Once GRT is staked, it may only be withdrawn subject to a thawing period, which provides ample opportunity for verification and dispute resolution.

- It provides a Sybil resistance mechanism. Having fake or low quality Indexers on a given subgraph makes it slower to find quality service providers. For this reason we only want Indexers who have skin in the game to be discoverable.

In order for the above mechanisms to function correctly, it’s important that Indexers are incentivized to hold GRT roughly in proportion to the amount of useful work they’re doing in the network.

A naive approach would be to try to make it so that each GRT staked entitles an Indexer to perform a specified amount of work on the network. There are two problems with this: first, it sets an arbitrary upper bound on the amount of work the network can perform; and second, it is nearly impossible to enforce in a way that is scalable, since it would require that all work be centrally coordinated on-chain.

A better approach has been pioneered by the team at 0x. It involves collecting a protocol fee on all transactions in the protocol, and then rebating those fees to participants as a function of their proportional stake and proportional fees collected for the network, using the Cobb-Douglas production function.

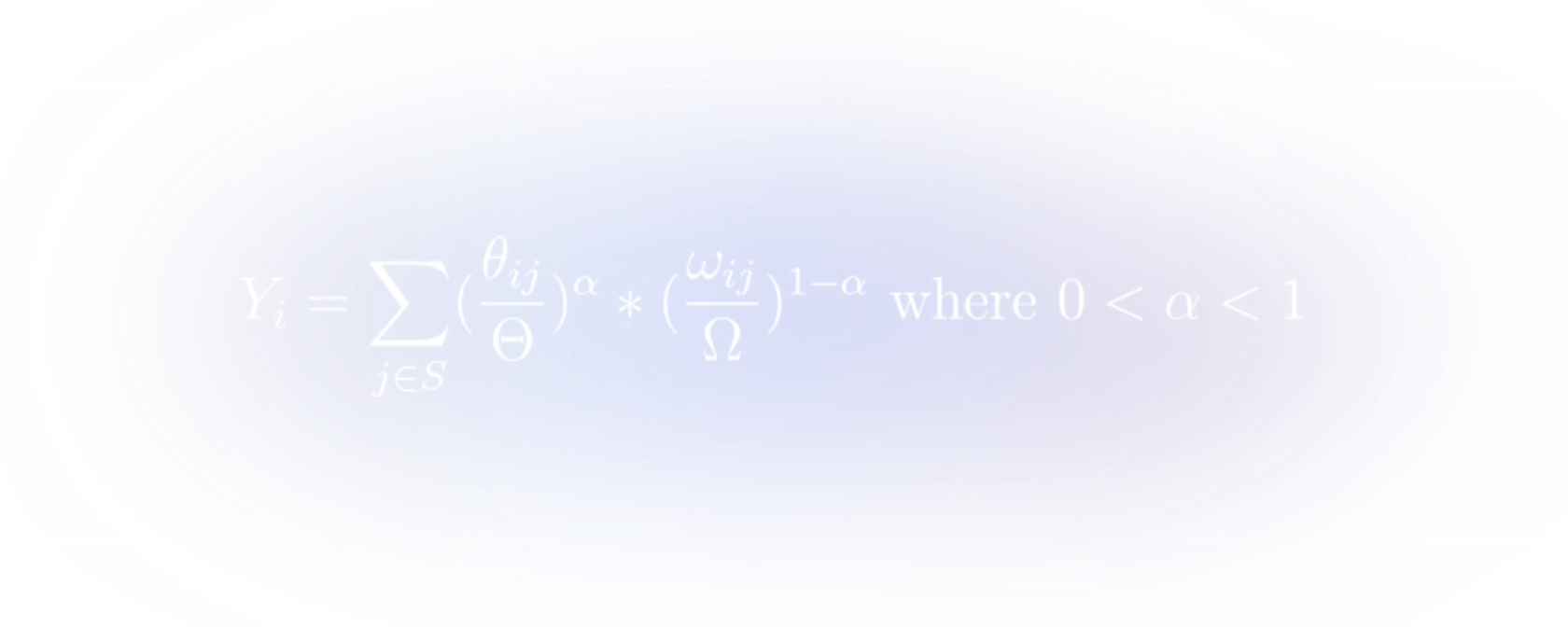

In our construction, the share of the rebate pool Yi that an Indexer i receives during a time period is a Cobb-Douglas function of the Indexer’s proportional stake and proportional query fees. Here ωij is the amount of stake that Indexer i has allocated towards subgraph j, Ω is the total amount staked in the network, θij is the amount of query fees Indexer i has generated for the rebate pool on subgraph j and Θ is the total amount of query fees collected by the protocol.
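For reference, a Cobb-Douglas rebate over these quantities can be written out as below. This is a reconstruction from the symbol definitions in the text, not a quotation of the protocol specification: the exponent α and the summation over subgraphs j are assumptions.

```latex
Y_i = \sum_{j} \left(\frac{\omega_{ij}}{\Omega}\right)^{\alpha}
               \left(\frac{\theta_{ij}}{\Theta}\right)^{1-\alpha},
\qquad 0 < \alpha < 1
```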

I recommend reading the 0x team’s work on this for the details, but what’s interesting for our purpose is that in equilibrium a rational decision maker would be expected to budget a stable proportion of their spending between the two inputs to the production function. In our case, those inputs would be the cost of renting or owning GRT and the operating expenses of running a Graph Node, which allows an Indexer to perform more work, and by extension collect more query fees, for the network.

Because we would expect all rational Indexers to make an equivalent budgeting decision, in equilibrium, we should expect Indexers to stake a proportion of total GRT staked, equal to the proportion of work they have performed for the network.
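To make the equilibrium intuition concrete, here is a minimal Python sketch of a Cobb-Douglas rebate split. It is an illustration under stated assumptions, not the protocol's implementation: the exponent `alpha`, the aggregation of each Indexer's stake and fees across subgraphs, and the final normalisation so that shares sum to 1 are all simplifications introduced here.

```python
def rebate_shares(stakes, fees, alpha=0.5):
    """Return each Indexer's Cobb-Douglas weight, normalised to sum to 1.

    stakes and fees map an Indexer name to its total stake and query fees.
    alpha is a hypothetical exponent, not a published protocol parameter.
    """
    total_stake = sum(stakes.values())
    total_fees = sum(fees.values())
    weights = {
        name: (stakes[name] / total_stake) ** alpha
        * (fees[name] / total_fees) ** (1 - alpha)
        for name in stakes
    }
    total_weight = sum(weights.values())
    return {name: weight / total_weight for name, weight in weights.items()}


# Stake held in proportion to fees generated: the rebate share matches it.
balanced = rebate_shares({"a": 250, "b": 750}, {"a": 25, "b": 75})

# Indexer "a" generates half the fees but holds only a tenth of the stake,
# so its rebate share falls below its share of the fees.
under_staked = rebate_shares({"a": 100, "b": 900}, {"a": 50, "b": 50})
```

In the second case Indexer "a" would recover a smaller share of the pool than the share of fees it generated, which is the incentive to acquire more stake.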

The beauty is that this distribution of stake doesn’t need to be enforced in the protocol, but rather, it arises naturally from Indexers making decisions in their own economic best interest.

Delegation

For token holders who do not wish to sell their Graph Tokens, but want to put their tokens to productive use securing the network, the protocol introduces delegation.

A Delegator can “loan” their GRT to an Indexer, in return for a share of their query fees and indexer rewards, as specified by the Indexer.

In addition to increasing token participation, delegation presents an opportunity for smaller, capital-constrained Indexers to be more competitive in the decentralized network by providing a high quality of service and attracting Delegators.

An important choice staking protocols must make with respect to delegation is whether delegated stake can be slashed due to Indexer misbehavior. In The Graph, delegated stake will not be slashable, because slashable delegation encourages a trust relationship between Delegators and Indexers that could lead to winner-take-all mechanics and hurt decentralization.

Similar to other protocols that have made this choice, The Graph will enforce a limit on how much delegated stake an Indexer can accept for every unit of their own stake. This “delegation capacity” ensures that an Indexer is always putting a minimum amount of their own funds at stake to participate in the network.
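The delegation capacity rule can be sketched in a few lines of Python. The function name and the `capacity_ratio` value are hypothetical; the text does not state the actual ratio.

```python
def accepted_delegation(own_stake, offered_delegation, capacity_ratio):
    """Return how much offered delegation an Indexer can accept.

    capacity_ratio is the maximum delegated GRT per unit of the Indexer's
    own stake; the value used below is hypothetical.
    """
    if own_stake < 0 or offered_delegation < 0 or capacity_ratio < 0:
        raise ValueError("amounts and ratio must be non-negative")
    capacity = own_stake * capacity_ratio
    return min(offered_delegation, capacity)


# An Indexer with 1,000 GRT of own stake and a 10x capacity is offered 25,000 GRT.
accepted = accepted_delegation(own_stake=1_000, offered_delegation=25_000, capacity_ratio=10)
```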

Curator Signaling

For a consumer to query a subgraph, the subgraph must first be indexed—a process which can take hours or even days. If Indexers had to blindly guess which subgraphs they should index on the off-chance that they would earn query fees, the market would not be very efficient.

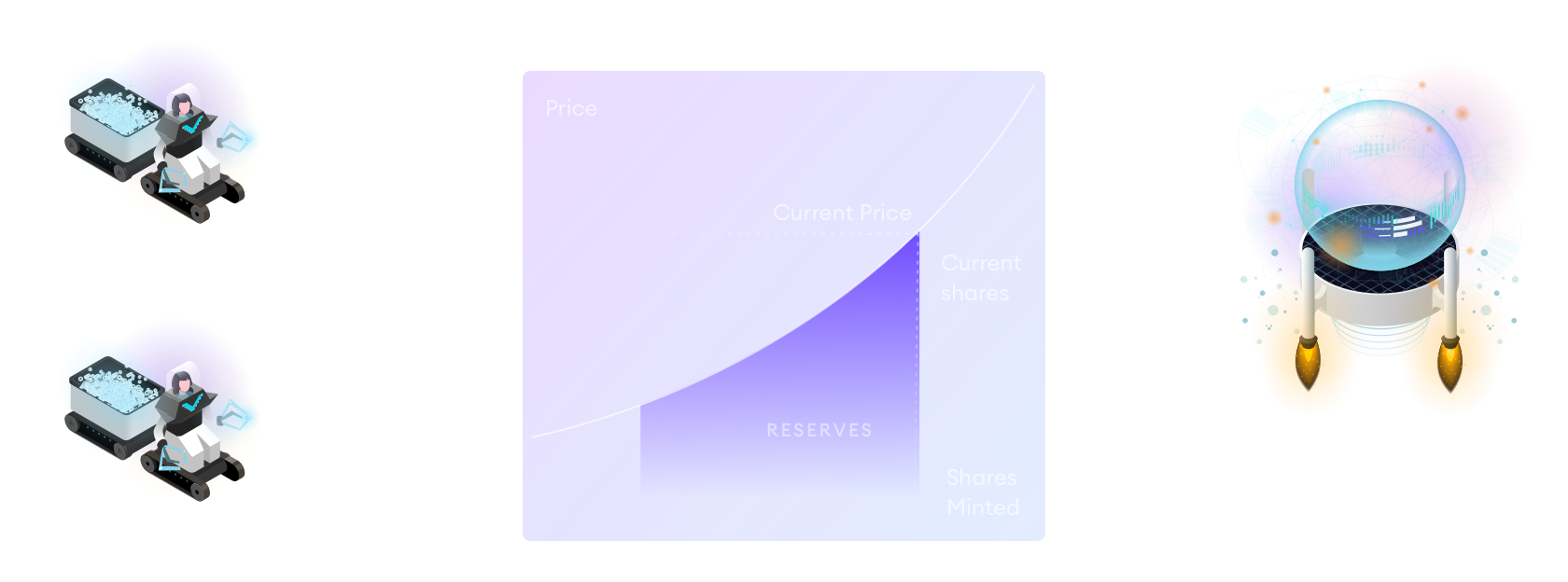

Curator signaling is the process of depositing GRT into a bonding curve for a subgraph to indicate to Indexers that the subgraph should be indexed.

Indexers can trust the signal because when curators deposit GRT into the bonding curve, they mint a curation share for the respective subgraph, entitling them to a portion of future query fees collected on that subgraph. A rationally self-interested curator should signal GRT toward subgraphs that they predict will generate fees for the network.

Using bonding curves—a type of algorithmic market maker where price is determined by a function—means that the more curation shares are minted, the higher the exchange rate between GRT and curation shares becomes. Thus, successful curators could take profits immediately if they feel that the value of future curation fees has been correctly priced in. Similarly, they should withdraw their GRT if they feel that the market has priced the value of curation shares too high.
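The price dynamic can be illustrated with a toy bonding curve in Python. The linear price function, the `slope` parameter and the class name are assumptions chosen for simplicity; the text does not specify the curve The Graph uses.

```python
import math


class LinearBondingCurve:
    """Toy bonding curve where the share price rises linearly with supply.

    price(s) = slope * s, so the reserve backing s shares is slope * s**2 / 2.
    The linear shape and the slope are illustrative assumptions.
    """

    def __init__(self, slope=1.0):
        self.slope = slope
        self.shares = 0.0   # total curation shares outstanding
        self.reserve = 0.0  # total GRT held by the curve

    def deposit(self, grt):
        """Deposit GRT and return the number of shares minted."""
        new_reserve = self.reserve + grt
        new_shares = math.sqrt(2 * new_reserve / self.slope)
        minted = new_shares - self.shares
        self.shares, self.reserve = new_shares, new_reserve
        return minted

    def withdraw(self, shares):
        """Burn shares and return the GRT released."""
        if shares > self.shares:
            raise ValueError("cannot burn more shares than exist")
        new_shares = self.shares - shares
        new_reserve = self.slope * new_shares ** 2 / 2
        released = self.reserve - new_reserve
        self.shares, self.reserve = new_shares, new_reserve
        return released


curve = LinearBondingCurve()
early_shares = curve.deposit(100)          # early curator
late_shares = curve.deposit(300)           # later curator pays more per share
early_exit = curve.withdraw(early_shares)  # early curator takes profit
```

In this sketch the later curator pays three times as much GRT for the same number of shares, and the early curator can exit with more GRT than they deposited.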

This dynamic means that the amount of GRT signaled toward a subgraph should provide an ongoing and valuable market signal as to the market’s prediction for future query volume on a subgraph.

Indexer Reward

Another mechanism we employ related to indexer staking and curator signaling is the indexer reward.

This reward, which is paid through new token issuance, is intended to incentivize Indexers to index subgraphs that don’t yet have significant query volume. This helps to solve the bootstrapping problem for new subgraphs, which may not have pre-existing demand to attract Indexers.

The way it works is that each subgraph in the network is allotted a portion of the total network token issuance, based on the proportional amount of total curation signal that subgraph has. That amount, in turn, is divided between all the Indexers staked on that subgraph proportional to their amount of contributed stake.

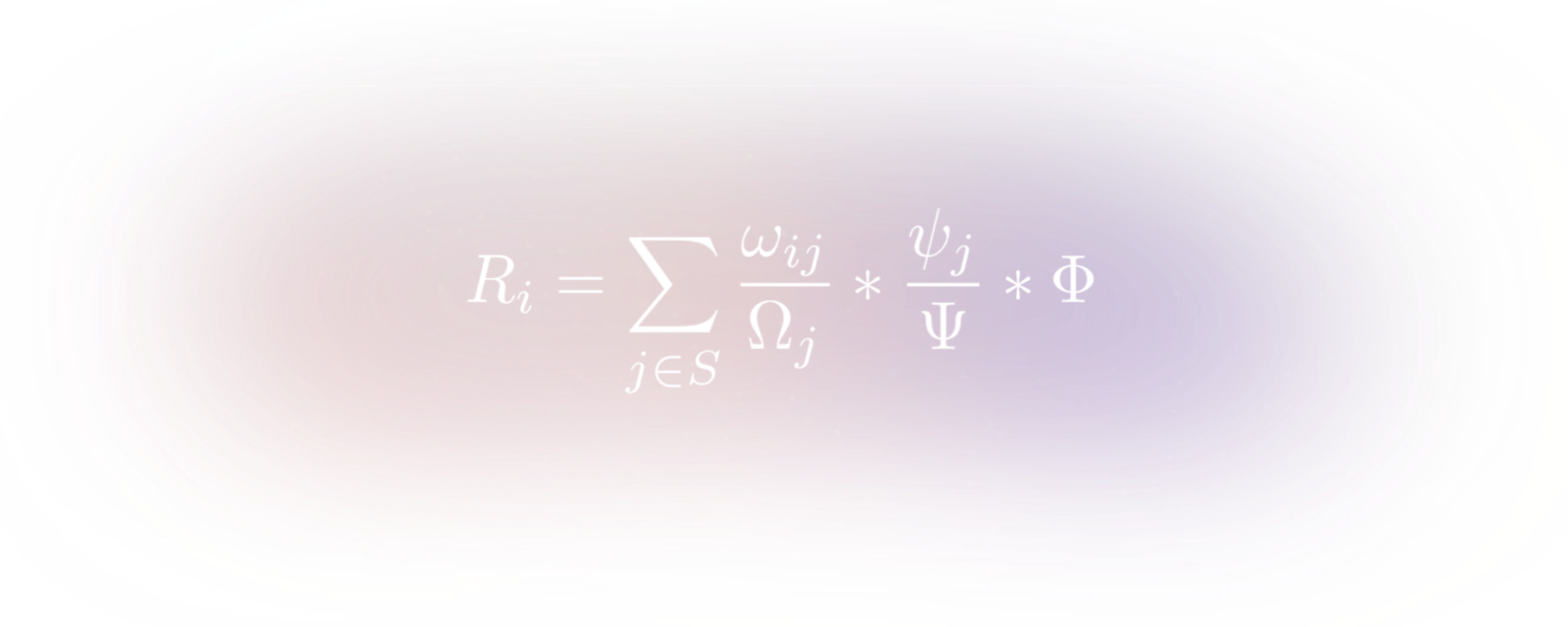

More formally, the indexer inflation reward for Indexer i is Φ · Σj (ψj / Ψ) · (ωij / Ωj), summed over the subgraphs j on which the Indexer has staked, where ωij is the amount that Indexer i has staked on subgraph j, Ωj is the total amount staked on subgraph j, ψj is the amount of GRT signaled for subgraph j, Ψ is the total amount signaled in the network and Φ is the total network indexer reward denominated in GRT.
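The two-level split described above (issuance divided across subgraphs by signal, then across Indexers by stake) can be sketched in Python as follows. The function name, the data layout and the example numbers are illustrative assumptions.

```python
def indexer_rewards(issuance, signal, allocations):
    """Split `issuance` GRT across Indexers.

    signal:      {subgraph: GRT signaled on it}
    allocations: {subgraph: {indexer: GRT staked on it}}
    Subgraphs with no stake allocated are skipped in this sketch.
    """
    total_signal = sum(signal.values())
    rewards = {}
    for subgraph, stakes in allocations.items():
        subgraph_stake = sum(stakes.values())
        if subgraph_stake == 0:
            continue
        # Portion of issuance allotted to this subgraph by curation signal.
        subgraph_pool = issuance * signal[subgraph] / total_signal
        for indexer, stake in stakes.items():
            share = subgraph_pool * stake / subgraph_stake
            rewards[indexer] = rewards.get(indexer, 0.0) + share
    return rewards


rewards = indexer_rewards(
    issuance=1_000,
    signal={"subgraph-a": 300, "subgraph-b": 100},
    allocations={
        "subgraph-a": {"alice": 50, "bob": 150},
        "subgraph-b": {"alice": 200},
    },
)
```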

Setting the issuance rate dynamically in a way that is optimal is an area of ongoing research, but suffice it to say that it will be low, most likely in the single digits.

This mechanism provides an additional incentive for Indexers to be responsive to the signal provided by curators, making curation an even more useful activity to engage in.

Verifiable Indexing

Given the protocol’s subsidization of subgraph indexing through the indexer reward, it’s important that the mechanism is used to subsidize useful work. For this, we introduce Proofs of Indexing and a Subgraph Availability Oracle.

The intent of both these mechanisms is to mitigate possible economic attacks where an Indexer attempts to collect the Indexer reward without providing useful work to the network. This could take the following forms:

- Not actually indexing a subgraph that you are collecting rewards on.

- Indexing a subgraph that is broken or whose subgraph manifest is unavailable.

To mitigate the first attack, and some instances of the second, the protocol introduces Proofs of Indexing. In their current form, these are simply a signature over a message digest that is generated during the indexing of a subgraph from genesis. Each time a subgraph’s state is updated, the message digest is updated as well.

When an Indexer goes to claim their indexer reward on a given subgraph, they must supply a recent Proof of Indexing to claim the reward. Because the Proof of Indexing (PoI) is computed from the Indexer’s signature, each Indexer must submit a PoI that is specific to them. Since Indexers compete for indexer rewards on a given subgraph, it is not in the best interest of Indexers to collude to help each other generate correct PoIs without actually doing the work.
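A rough Python sketch of the idea follows: a running digest that is updated with every state change, plus an Indexer-specific proof over it. The hash construction, the genesis value and the function names are assumptions, and HMAC is used purely as a standard-library stand-in for the Indexer's signature; it is not the scheme the protocol uses.

```python
import hashlib
import hmac


def next_digest(previous_digest: bytes, state_update: bytes) -> bytes:
    """Fold one state update into the running digest."""
    return hashlib.sha256(previous_digest + state_update).digest()


def indexing_digest(state_updates) -> bytes:
    """Digest of every state update since genesis, in order."""
    digest = b"\x00" * 32  # hypothetical genesis value
    for update in state_updates:
        digest = next_digest(digest, update)
    return digest


def proof_of_indexing(indexer_key: bytes, digest: bytes) -> bytes:
    """HMAC stand-in for the Indexer's signature over the digest."""
    return hmac.new(indexer_key, digest, hashlib.sha256).digest()


updates = [b"block 1: entity A created", b"block 2: entity A updated"]
digest = indexing_digest(updates)
poi_alice = proof_of_indexing(b"alice-secret", digest)
poi_bob = proof_of_indexing(b"bob-secret", digest)
```

Both Indexers arrive at the same digest by doing the same work, but their proofs differ, so one Indexer's PoI cannot simply be copied by another.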

These PoIs are accepted optimistically, in that they immediately unlock rewards, but they can be used later to slash an Indexer if they are found to be incorrectly formed. Slashing conditions could include deterministically attributable faults such as:

- A PoI that represents an incorrect state of a subgraph

- Providing a PoI on an invalid subgraph

In the first version of the network, an Arbitrator set through governance will decide disputes and has the power to end them in a “draw” if a fault is not clearly attributable, or if it was the result of a software bug rather than malicious behavior by the Indexer. The decisions of an Arbitrator will be based on the protocol specification as well as an “arbitration charter” which outlines how disputes should be settled.

Another type of fault, which is inherently subjective, is whether or not a subgraph manifest is available. If a subgraph manifest is unavailable, then it becomes impossible for an Arbitrator to settle any of the other disputes for that subgraph, and it also becomes impossible for other Indexers to compete for indexer rewards on that subgraph.

For this use case, we introduce a Subgraph Availability Oracle, also set through governance. The oracle will look at several prominent IPFS endpoints, such as the Cloudflare IPFS Gateway, to determine whether a subgraph manifest is available. If a subgraph manifest is unavailable, then that corresponding subgraph will not be eligible for any indexer rewards.
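As a sketch of what such a check might look like, the Python below polls a list of gateways through an injected `fetch` function. The function names, the gateway labels and the rule that one responding gateway is enough are all assumptions; the text does not say how the oracle combines results from different endpoints.

```python
from typing import Callable, Iterable


def manifest_available(
    manifest_id: str,
    gateways: Iterable[str],
    fetch: Callable[[str, str], bool],
) -> bool:
    """Return True if at least one gateway serves the manifest.

    fetch(gateway, manifest_id) is injected so the rule can be exercised
    without network access; the "any gateway" rule is an assumption.
    """
    return any(fetch(gateway, manifest_id) for gateway in gateways)


# Stub fetcher standing in for real HTTP requests to IPFS gateways.
served = {("gateway-one", "manifest-ok")}


def stub_fetch(gateway: str, manifest_id: str) -> bool:
    return (gateway, manifest_id) in served


gateways = ["gateway-one", "gateway-two"]
eligible = manifest_available("manifest-ok", gateways, stub_fetch)
ineligible = manifest_available("manifest-missing", gateways, stub_fetch)
```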

Graph Explorer and Graph Name Service

Curating subgraphs for Indexers is only half of the story when it comes to surfacing valuable subgraphs. We also want to surface valuable subgraphs for developers.

This is one of the core value propositions of The Graph—to help developers find useful data to build on and make it effortless to incorporate data from a variety of underlying protocols and decentralized data sources into a single application.

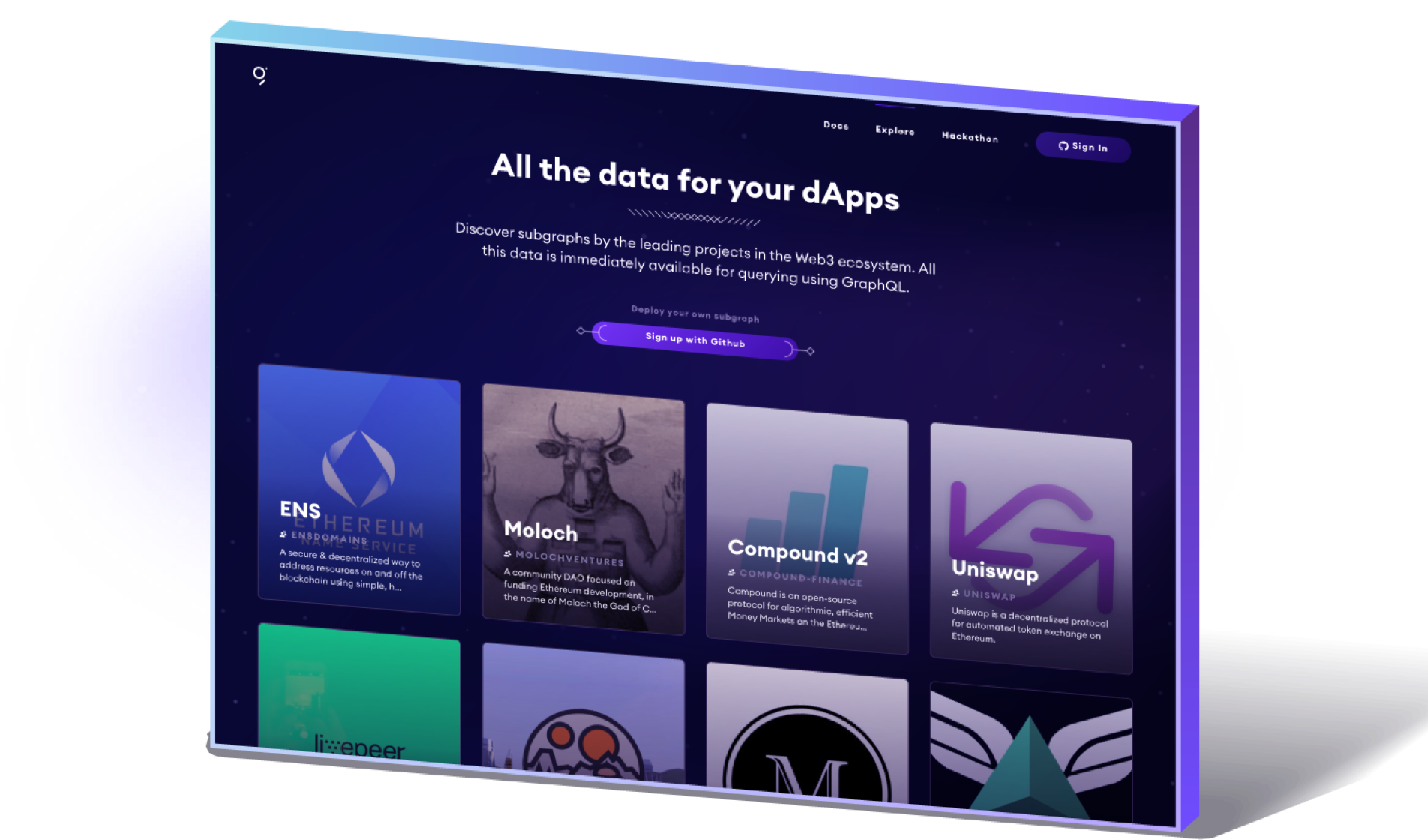

Currently, developers accomplish this by navigating to Graph Explorer.

In The Graph Network, Graph Explorer will be a dApp, built on top of a subgraph that indexes The Graph’s protocol smart contracts (meta, I know!)—including the Graph Name Service (GNS), an on-chain registry of subgraphs.

A subgraph is defined by a subgraph manifest, which is immutable and stored on IPFS. The immutability is important for having deterministic and reproducible queries for verification and dispute resolution. The GNS performs a much needed role by allowing teams to attach a name to a subgraph, which can then be used to point to consecutive immutable subgraph “versions.”
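The relationship between a mutable name and its immutable versions can be pictured with a small in-memory registry in Python. This is only a model of the idea: the class, its methods and the identifiers are hypothetical, and the real GNS is an on-chain contract.

```python
class NameRegistry:
    """Toy registry mapping a mutable name to immutable subgraph versions."""

    def __init__(self):
        self._versions = {}  # name -> list of manifest identifiers
        self._owners = {}    # name -> owner

    def publish(self, owner, name, manifest_id):
        """Register a name, or append a new version if the caller owns it."""
        if self._owners.setdefault(name, owner) != owner:
            raise PermissionError("only the owner can publish a new version")
        self._versions.setdefault(name, []).append(manifest_id)

    def resolve(self, name):
        """Return the manifest identifier of the latest version."""
        return self._versions[name][-1]

    def history(self, name):
        """Return all versions published under a name, oldest first."""
        return list(self._versions[name])


registry = NameRegistry()
registry.publish("team-a", "example-subgraph", "manifest-v1")
registry.publish("team-a", "example-subgraph", "manifest-v2")
latest = registry.resolve("example-subgraph")
```

Older versions stay addressable by their own identifiers, which is what keeps earlier queries reproducible for dispute resolution.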

These human-readable names, along with other metadata stored in the GNS, allow users of Graph Explorer to get a better sense of the purpose and possible utility of a subgraph in a way that a random string of alphanumeric characters and compiled WASM byte code does not.

In The Graph Network, discovering useful subgraphs will be even more important, as a proposed future update to the protocol is subgraph composition. Rather than simply letting dApps build on multiple subgraphs, subgraph composition will allow brand new subgraphs to be built that directly reference entities from existing subgraphs.

This reuse of the same subgraphs across many dApps and other subgraphs is one of the core efficiencies that The Graph unlocks. Compare this approach to the current state of the world where each new application deploys their own database and API servers, which often go underutilized.

Signal Migration

The Graph Name Service (GNS) also exposes curation functionality. However, rather than signaling on immutable subgraphs, as is the case in the core protocol, the GNS supports signaling on mutable subgraph names. Tokens that are signaled on a subgraph name are automatically migrated to the latest version when the subgraph owner updates versions. This is akin to delegating a signal to the owner of a named subgraph (not to be confused with delegating stake to an Indexer).

This can provide a benefit to both Curators and subgraph developers. The subgraph developer is able to attract more Indexers to index their new subgraph version than they would be able to with just their own signal. The Curators, meanwhile, are guaranteed to always be signaled on the version that the subgraph developer intends to direct query traffic to.

There are also risks, however, to signaling on a name. First, there is a curation tax that is charged to both the Curator and the subgraph owner on upgrade. Normally, this tax is paid only by the Curator on deposit, and the Curator is in control of when they signal and unsignal. When signaling on a name, the Curator does not control when an upgrade happens. While it would not be in a subgraph owner’s best interest to repeatedly upgrade versions, if they are forced to do so, for example to fix bugs in their subgraph, this would drain a portion of Curators’ signal on each migration.
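The drain from repeated migrations compounds, which a short Python sketch makes visible. The tax rate used here is hypothetical, and the sketch ignores any price effects from the bonding curve itself.

```python
def signal_after_migrations(signal, curator_tax_rate, migrations):
    """GRT signal left after a number of auto-migrations.

    curator_tax_rate is the fraction of the Curator's signal charged on
    each upgrade; the value used below is hypothetical.
    """
    if not 0 <= curator_tax_rate < 1:
        raise ValueError("tax rate must be in [0, 1)")
    if migrations < 0:
        raise ValueError("migrations must be non-negative")
    return signal * (1 - curator_tax_rate) ** migrations


# 10,000 GRT signaled on a name whose owner ships four bug-fix versions.
remaining = signal_after_migrations(signal=10_000, curator_tax_rate=0.0125, migrations=4)
```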

Another risk is that the subgraph owner may not be in control of the primary source of query traffic on the subgraph. In that case, a Curator whose signal is auto-migrated to a new version may miss out on a share of query fees that are still being sent to a previous version of the subgraph.

Conditional Micropayments

Our payments layer is designed to minimize trust between the consumer and the Indexer. Payment channels are a technology that has been developed for scalable, off-chain, trust-minimized payments. They involve two parties locking funds on-chain in an escrow, where the funds may only be used to exchange funds off-chain between them until a transaction is submitted on-chain to withdraw funds from the escrow.

Traditionally, payment channel designs have emphasized securely sending a micropayment off-chain without regard for whether or not the service or good being paid for was actually received.

There has been some work, however, toward atomic swaps of micropayments for some digital good or outsourced computation, which we build on here. We call our construction WAVE Locks. WAVE stands for work, attestation, verification, expiration, and the general design is as follows:

- Work. A consumer sends a locked micropayment with a description of the work to be performed. This specification of the work acts as the lock on the micropayment.

- Attestation. A service provider responds with the digital good or service being requested along with a signed attestation that the work was performed correctly. This unlocks the micropayment optimistically, on the assumption that the attestation is correct.

- Verification. The attestation is verified using some method of verification. There may be penalties, such as slashing, for attesting to work which was incorrectly performed. Verification of the attestation happens out-of-channel.

- Expiration. The service provider must either receive a confirmation of receipt from the consumer or submit their attestation on-chain to receive their micropayment before the locked micropayment expires.
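The work, attestation and expiration steps above can be sketched as a small Python model. The data structures, field names and the use of a SHA-256 hash of the work description as the lock are assumptions for illustration; verification is deliberately left out because it happens out-of-channel.

```python
import hashlib
from dataclasses import dataclass


def work_id(description: str) -> str:
    """Hash of the work description; this acts as the lock."""
    return hashlib.sha256(description.encode()).hexdigest()


@dataclass(frozen=True)
class LockedPayment:
    amount: int
    lock: str        # work_id of the requested work
    expires_at: int  # hypothetical expiry, e.g. a block number


@dataclass(frozen=True)
class Attestation:
    lock: str           # work_id the provider claims to have performed
    response_hash: str  # hash of the response that was returned
    signer: str         # placeholder for a real signature


def try_unlock(payment: LockedPayment, attestation: Attestation, now: int) -> int:
    """Return the amount released to the provider, or 0.

    The attestation is accepted optimistically: it is only checked against
    the lock and the expiry. Verifying it is a separate, out-of-channel step.
    """
    if now >= payment.expires_at:
        return 0
    if attestation.lock != payment.lock:
        return 0
    return payment.amount


query_lock = work_id("{ tokens { id } }")
payment = LockedPayment(amount=5, lock=query_lock, expires_at=100)
attestation = Attestation(lock=query_lock, response_hash="abc123", signer="indexer-1")
released = try_unlock(payment, attestation, now=50)
```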

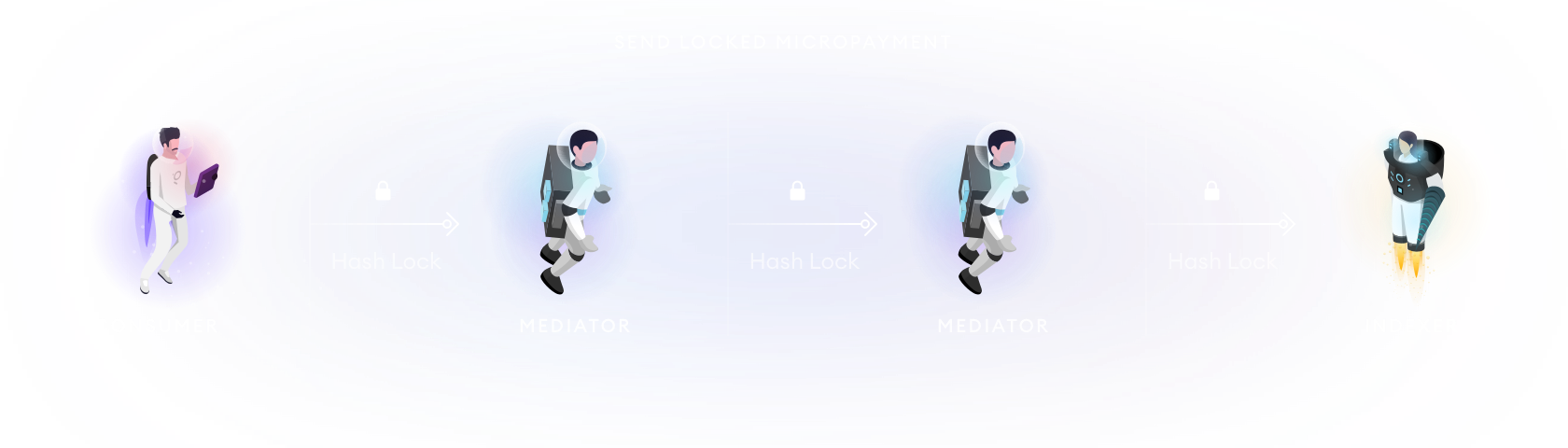

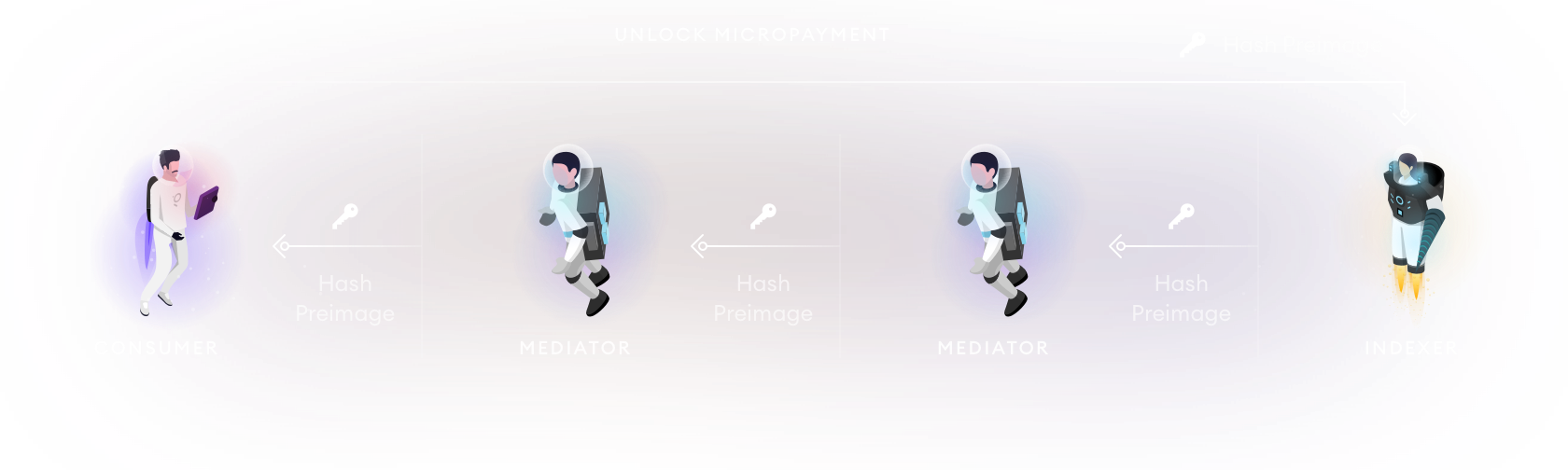

Using locks with payment channels is not new. Both Lightning and Raiden papers discuss using a hash preimage to unlock a micropayment. This is particularly useful for multi-hop micropayments where each “hop” is locked with the same hash and can be unlocked by a value, the preimage, that produces that hash when input to a specified hashing function.

Example of multi-hop payment channels using a hash preimage to unlock payments.
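A hash lock of this kind is simple to express in Python. This sketch uses SHA-256 and invented hop labels; it illustrates the general mechanism rather than the exact construction in either the Lightning or Raiden designs.

```python
import hashlib
import secrets


def hash_lock(preimage: bytes) -> bytes:
    """Return the hash that locks a payment."""
    return hashlib.sha256(preimage).digest()


def can_unlock(lock: bytes, candidate_preimage: bytes) -> bool:
    """A payment unlocks only for a value that hashes to the lock."""
    return hashlib.sha256(candidate_preimage).digest() == lock


# The recipient picks a secret and shares only its hash with the sender.
preimage = secrets.token_bytes(32)
lock = hash_lock(preimage)

# Every hop on the route locks its payment with the same hash.
route = ["consumer -> node", "node -> indexer"]
hops = {hop: lock for hop in route}

# Revealing the preimage lets each hop claim its payment.
unlocked = {hop: can_unlock(hop_lock, preimage) for hop, hop_lock in hops.items()}
```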

While we could roll our own payment channel solution, which is purpose-built with this new locking mechanism, a more pragmatic solution is to use state channels.

We can think of state channels as being to payment channels what smart contract blockchains like Ethereum are to Bitcoin. They can handle the simple payments use case, but they can also codify more complex state transitions while keeping the same scalability and security properties as a payment channel.

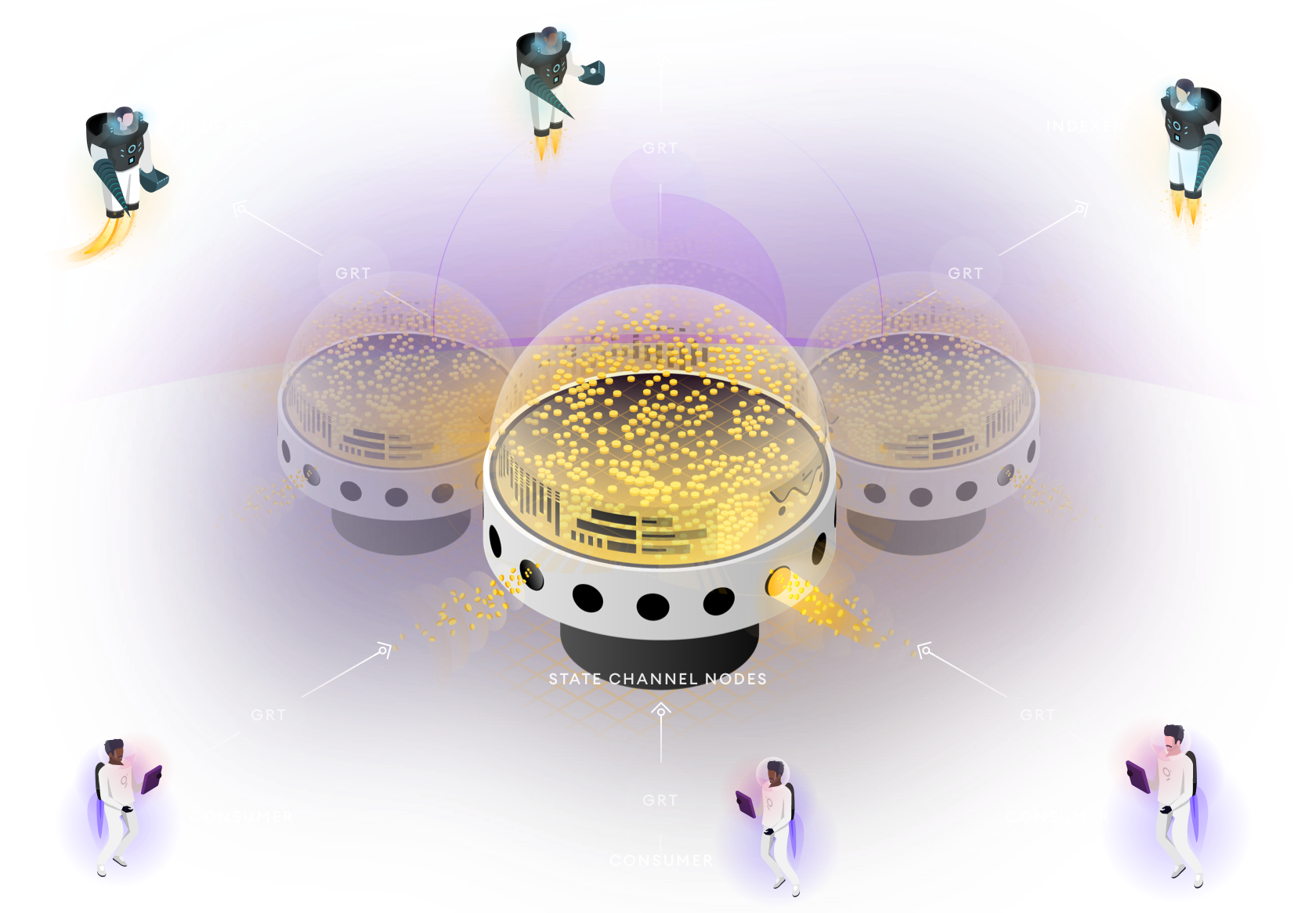

What payment and state channels have in common, however, is that in their most basic form they are a means of exchanging value or state updates between two participants, which are known ahead of time. As alluded to above with the multi-hop micropayments, sending between any two participants requires being able to form a chain of payment channels across multiple other participants, which connects the two original participants.

The Graph protocol will support Consumers and Indexers connecting via direct channels or via a network of state channel nodes as shown above. For simplicity, the default client implementations at launch will use direct channels.

One advantage of having a state channel network available, aside from the scalability benefits, is that Consumers could use third-party off-chain market makers to buy GRT in exchange for ETH or the stablecoin of their choice, just in time to pay for queries. This reduces balance sheet risk for Consumers that prefer to hold an asset whose value doesn’t fluctuate.

Query Verification

In order for the WAVE Locks construction and indexer staking to be meaningful, there must be an effective verification mechanism that is capable of reproducing the work performed by an Indexer, identifying faults and slashing offending Indexers.

In the first phase of The Graph Network, this is handled through an on-chain dispute resolution process, which is decided through arbitration.

For queries, we support two types of disputes:

- Single Attestation Disputes

- Conflicting Attestation Disputes

In single attestation disputes, Fishermen submit disputes along with a bond, as well as an attestation signed by an Indexer. If the Indexer is found to have attested to an incorrect query response, the Fisherman receives a portion of the slashed amount as a reward. Conversely, the Fisherman’s bond is forfeited if the dispute is unsuccessful.

Importantly, the Fisherman’s reward must be less than the slashed amount. Otherwise, malicious Indexers could simply slash themselves to get around thawing periods or avoid slashing by someone else.
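The settlement logic for a single attestation dispute can be sketched in Python as below. The `slash_fraction` and `reward_fraction` parameters and their values are hypothetical, as is the assumption that a successful Fisherman gets their bond back alongside the reward.

```python
def settle_single_attestation_dispute(
    indexer_stake, bond, fault_found, slash_fraction, reward_fraction
):
    """Return (indexer_stake_after, fisherman_payout).

    slash_fraction and reward_fraction are hypothetical parameters.
    reward_fraction must be below 1 so the reward is less than the slash.
    """
    if not 0 < slash_fraction <= 1:
        raise ValueError("slash fraction must be in (0, 1]")
    if not 0 <= reward_fraction < 1:
        raise ValueError("the reward must be strictly less than the slashed amount")
    if not fault_found:
        # Unsuccessful dispute: the Indexer is untouched and the bond is forfeited.
        return indexer_stake, 0
    slashed = indexer_stake * slash_fraction
    reward = slashed * reward_fraction
    # Assumption: the bond is returned along with the reward.
    return indexer_stake - slashed, bond + reward


stake_after, payout = settle_single_attestation_dispute(
    indexer_stake=10_000, bond=100, fault_found=True,
    slash_fraction=0.1, reward_fraction=0.5,
)
```

Because the reward is strictly smaller than the slash, an Indexer who disputes their own attestation still ends up with less than they started with.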

For single attestation disputes, the Fisherman is assumed to be an actor who got their hands on the attestation through some extra-protocol means, for example a third party logging service.

We expect the far more common use case to be conflicting attestation disputes. In this case, a Fisherman can submit two attestations for the same query, signed by two different Indexers. If the attestations don’t agree with one another, then it’s guaranteed that one or both Indexers committed a fault.
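Detecting a conflict needs nothing more than comparing two attestations, as this Python sketch shows. The `QueryAttestation` fields are assumptions about what an attestation might contain, and signature checking is omitted.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QueryAttestation:
    indexer: str
    request_hash: str   # identifies the query that was asked
    response_hash: str  # identifies the response the Indexer attested to


def is_conflict(a: QueryAttestation, b: QueryAttestation) -> bool:
    """True if two different Indexers attested to different responses
    for the same query."""
    return (
        a.indexer != b.indexer
        and a.request_hash == b.request_hash
        and a.response_hash != b.response_hash
    )


first = QueryAttestation("indexer-1", "query-abc", "response-111")
second = QueryAttestation("indexer-2", "query-abc", "response-222")
agreeing = QueryAttestation("indexer-3", "query-abc", "response-111")
conflict_found = is_conflict(first, second)
```

A conflict shows that at least one Indexer is at fault but not which one, so the dispute still has to be decided.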

Consumers, when querying The Graph, may choose to intermittently send the same query to multiple Indexers, both for extra security and for the chance of winning a portion of an offending Indexer’s slashed stake as a reward. This strategy works better as the number of unique Indexers on a subgraph increases.

In the first version of the network, there will be an Arbitrator set via protocol governance, which will decide the outcome of disputes. Similar to indexing disputes, the Arbitrator may exercise judgment when incorrect queries may arise as a result of bugs in the software, Indexers missing events from the blockchain, or other accidental factors that could lead to a slashable offense. Arbitration should settle disputes according to the protocol specification as well as the aforementioned arbitration charter.

Eventually, as the software matures, Indexers will be expected to develop the operational know-how to avoid these sorts of errors.

Future Work

After network launch, The Graph core team will be passing off its mantle as sole steward of the protocol to on-chain decentralized governance called The Graph Council. You can learn more about it in The Graph Council introductory blog post, but the TLDR is that future improvements to the protocol will go through a decentralized proposal process in which we will be active participants.

The future work outlined below represents a vision for the protocol that we’ll be advocating for as participants in that decentralized process, but is not a guarantee of the path the protocol will take, which is ultimately up to the stakeholders of the protocol.

Subgraph Composition

It should be possible to query entity relationships across subgraphs, and also build subgraphs that process data from other subgraphs. This will do for the open data layer of Web3 what smart contracts and “money legos” did for DeFi.

Multi-Blockchain Support

The Graph hosted service currently supports several Ethereum compatible chains and layer 2s, but The Graph protocol that is deployed to Ethereum mainnet will initially only support indexing Ethereum mainnet. Making sure the protocol incentives can be made to function, without being gameable, for indexing and serving queries on other chains is an area of future research.

Optimistic Rollup/Truebit-style Verification for Proofs of Indexing

Most of the work of indexing a subgraph already takes place in WASM or in a language that can compile to WASM. This lends itself to verifiably computing these at layer 2, using some form of bisection protocol and refereed game as is done in Optimistic Rollup and Truebit. This would allow us to eliminate the Arbitrator’s role in indexing disputes.

Verifiable Queries

Rather than rely on Arbitration for settling query disputes, the validity of queries could be guaranteed using cryptographic proofs. A good first step here would be to implement verifiable Proofs of Indexing as discussed in the previous paragraph. This lays the foundation for the design of PoIs to evolve from being a simple signature over a digest, to being a useful input for verifying queries, such as a polynomial commitment, Merkle tree or some other authenticated data structure.

Optimistic Rollup/Layer 2 for Protocol Economics

The protocol’s current use of state channels makes the network fairly robust to increased Ethereum gas costs and means that gas costs won’t scale dramatically as usage of the network increases. That being said, there are benefits to being able to settle state channels more frequently and have reward calculations be executed more regularly, which would be feasible on a layer 2 chain.

GraphQL Mutations

GraphQL Mutations provide a more semantically meaningful way to interact with a protocol than a smart contract ABI which is lower-level and whose methods don’t always map to a single conceptual action. Bundling GraphQL mutations with subgraphs will make it dramatically easier for a new developer to start interacting with on-chain protocols, in the same way The Graph has already lowered the bar for consuming data from said protocols.

GraphQL API V2

There are a handful of improvements we’ve identified based on the needs of subgraph developers. These include more efficient pagination, nested sorting and filtering and analytics functionality such as time-series bucketing.

Protocol Modeling & Simulation

We will continue to build on work started earlier this year in collaboration with the BlockScience team to better model the protocol and simulate different protocol parameter choices and possible upgrades. Data being collected as part of the currently active Mission Control testnet will be instrumental in this process.

Automated Monetary Policy

In the first version of the network, the issuance rate of Graph Tokens that is used to pay indexer rewards is set via governance. Long term, our goal is to reduce the governance surface area as much as possible. The aforementioned modeling and simulation work will be helpful towards that end. Monetary policy, with its potential to advantage certain stakeholders over others, is a ripe target for an automated rule that removes control from governance, keeping in line with The Graph’s governing principle of “progressive minimization” introduced in The Graph Council introductory blog post.

Conclusion

Thanks for reading! I hope this illuminates the rationale behind the choices we made while designing the network and explains what to expect from The Graph when it launches on mainnet.

If you have any questions, or want to get started building a subgraph today, join The Graph community.